Integrating CRO: How to Ensure Conversion Rate Optimisation and Design & Development Work Together

The design of a site is a crucial part of its success. For a website to be attractive to users, it needs to be both visually appealing and easy to use. Design and development changes can have a big impact on the conversion rate of a site, so it is vital that conversion rate optimisation (CRO) campaigns work in tandem with the design and development processes.

This post will highlight some of the key advantages of designers, developers, and optimisers working together, as well as what the different disciplines can learn from each other. It will also cover some of the potential pitfalls of integrating CRO campaigns into a design and development workstream, and suggest how these can be avoided.

How design can complement CRO work

Making site changes without going against brand guidelines

There are numerous reasons for restyling a page in an attempt to improve conversion rates. The font style and colour may be hard to read, the primary call to action (CTA) colour might be easy to miss and need to stand out more, or perhaps different sections of a form need to be differentiated from each other. These changes can run the risk of going against a company’s brand guidelines.

Designers often have a lot of experience working with brand guidelines and will be able to help to find a solution without breaking these guidelines – as long as the problem isn’t defined in a restrictive way. Clearly defining the issue will help to get the best possible results. Rather than suggesting designers ‘make the CTAs pink’, it is more useful to state the usability issue, for example, ‘make the primary CTAs stand out from other page elements’. This allows the designers to use their knowledge to achieve this objective within the brand guidelines that they’re working to.

Sharing learnings from experience with similar sites

Experienced designers and optimisers will have worked on a broad range of sites. This is an advantage – as the old saying goes, two heads are often better than one.

It’s beneficial to have industry-specific knowledge when designing and testing web pages as audiences and intent can vary greatly across sectors. For example, conversion wins on a travel website may not have the same impact on an insurance website. Having a solid understanding of audience preferences within a particular sector means more accurate CRO hypotheses and design proposals in the first place.

Working with vague criteria

While conversion optimisers can pinpoint specific barriers to conversions and create inspired solutions, some issues are less well defined but still equally important. Take this example from a remote user testing session that we ran earlier this year:

“The whole thing looks secure, and it’s telling me it’s been secured by Thawte. But the only thing as I said before really, I find the text maybe a little bit basic. Maybe if it was a little bit sharper, it might give me a little bit more confidence, not that I wouldn’t trust the site, but when you’re spending £1,000 on equipment then you really want it to look professional, completely professional.” - Tester on usertesting.com

This would naturally lead to a hypothesis that ‘making the site look more professional will increase conversions’, but what is ‘more professional’? This is an extremely subjective statement, but it is not something that can simply be ignored. The experience of designers is key to interpreting this statement and turning it into actionable site modifications.

How CRO can complement the design process

Validating designs through scientific testing

As with any working relationship, trust is built over time. With new clients it can be difficult to justify the costs of redesigning a website. This is where split testing can back up a designer’s intuition with solid facts gained through scientific testing methods applied through an A/B testing platform.

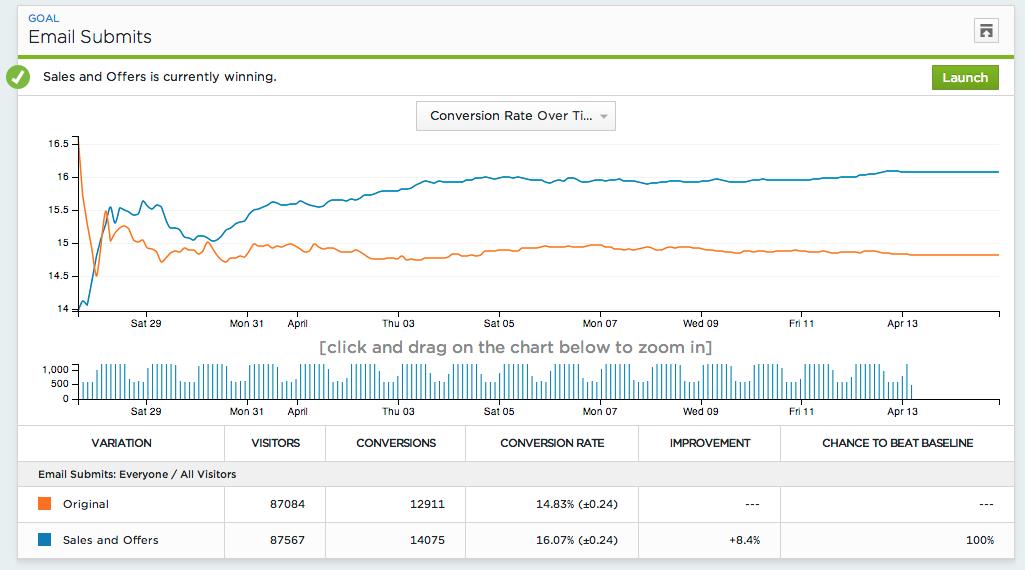

Optimizely’s reporting system helps to find statistically significant test results
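To give a feel for what ‘statistically significant’ means here, the sketch below runs a classic two-proportion z-test on the conversion counts of an original page and a variation. This is a textbook method used for illustration only and is not necessarily the calculation that any particular testing platform performs; the function name and the visitor and conversion figures are made up for the example.

```python
import math


def two_proportion_z_test(conv_a, visitors_a, conv_b, visitors_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical figures: original converts 200 of 10,000 visitors,
# the variation converts 260 of 10,000.
z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("significant at the 5% level" if p < 0.05 else "not significant at the 5% level")
```

A small p-value suggests the difference is unlikely to be down to chance alone, although it says nothing about whether the test ran for long enough or was checked too often along the way.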

Designers don’t need to justify themselves by explaining how much experience they have; they can simply point to the statistics that show how much their new design has out-performed the old one. Through uplift calculations based on the improved conversion rates it also then becomes possible to quantify the return on investment (ROI) that the design changes have delivered.
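As a rough illustration of the uplift and ROI arithmetic, here is a minimal sketch. It assumes that the uplift seen in the test holds steady over the projection period and that every extra conversion is worth one average order; all of the figures, names, and the twelve-month window are hypothetical.

```python
def relative_uplift(control_rate, variation_rate):
    """Relative improvement of the variation over the control, e.g. 0.25 = 25%."""
    return (variation_rate - control_rate) / control_rate


def projected_roi(monthly_visitors, control_rate, variation_rate,
                  avg_order_value, design_cost, months=12):
    """ROI of a redesign as (extra revenue - cost) / cost.

    Assumes the tested uplift persists for the whole period (an assumption,
    not a guarantee) and ignores margins, seasonality and traffic changes.
    """
    extra_conversions = monthly_visitors * (variation_rate - control_rate) * months
    extra_revenue = extra_conversions * avg_order_value
    return (extra_revenue - design_cost) / design_cost


# Hypothetical figures: 50,000 visitors a month, conversion rate up from
# 2.0% to 2.5%, £80 average order value, £20,000 spent on the redesign.
uplift = relative_uplift(0.020, 0.025)
roi = projected_roi(50_000, 0.020, 0.025, avg_order_value=80, design_cost=20_000)
print(f"Relative uplift: {uplift:.0%}")
print(f"Projected 12-month ROI: {roi:.1f}x the design cost")
```

In practice the revenue figure would usually be replaced with profit per order, and the projection period shortened if the site changes frequently.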

Reducing bias

Bias is everywhere and is a subject that could justify a blog series of its own! One simple way to reduce bias is to hand over the assessment and quality testing of designs to a CRO team. While conversion optimisers may have more training and knowledge about how to avoid biases, this is more about providing an outside perspective from someone who hasn’t worked hard to create the designs, and therefore doesn’t have as much attachment to them.

Sharing testing experience

Keeping a resource of previous test results is a great way to share learning experiences. Designers are able to dip in and draw ideas from previous winning variations that they know have worked well in the past.

There’s never any certainty that just because a design worked well on one site, it will perform equally well on another, so split testing is still required. However, as the database of A/B test results grows, it will become easier to find examples of designs which match the target industry and demographics, and therefore have a greater chance of performing as predicted.
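A results archive does not need to be elaborate to be useful. The sketch below shows one possible shape for it: a list of records that can be filtered by industry and audience to surface previous significant winners. The record fields, the function name and the example entries are all invented for illustration and do not come from real tests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentRecord:
    name: str
    industry: str
    audience: str
    uplift: float       # relative uplift of the variation, e.g. 0.12 = 12%
    significant: bool   # whether the result reached statistical significance


def similar_winners(records, industry, audience):
    """Return significant, positive results for a matching industry and audience,
    ordered from largest to smallest uplift."""
    matches = [
        r for r in records
        if r.significant and r.uplift > 0
        and r.industry == industry and r.audience == audience
    ]
    return sorted(matches, key=lambda r: r.uplift, reverse=True)


# Made-up archive entries, purely to show the lookup.
archive = [
    ExperimentRecord("Sticky booking bar", "travel", "families", 0.08, True),
    ExperimentRecord("Shorter quote form", "insurance", "over-50s", 0.15, True),
    ExperimentRecord("Price breakdown on results page", "travel", "families", 0.12, True),
    ExperimentRecord("Hero video", "travel", "families", 0.20, False),
]

for record in similar_winners(archive, "travel", "families"):
    print(f"{record.name}: {record.uplift:.0%} uplift")
```

Anything pulled from the archive is a starting point for a new hypothesis rather than a guaranteed win, so it still needs to be split tested on the new site.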

Ollie Battams gives his thoughts on how design and CRO can work well together:

"CRO is an integral part of finding ways to refine user experience. As a creative designer and front-end developer, insight gathered from user testing or split testing is invaluable for the work we produce. Testing different layouts and user journeys, as well as tests around key elements on pages, like CTA buttons, can help us produce impactful websites that perform for our clients.

"CRO and web design are closely connected. At Fresh Egg, these two disciplines work together to ensure CRO tests are set up correctly to produce the conversion data we need. I think this integrated approach is what sets Fresh Egg apart from most agencies."

How web development can complement CRO work

Developers can do more

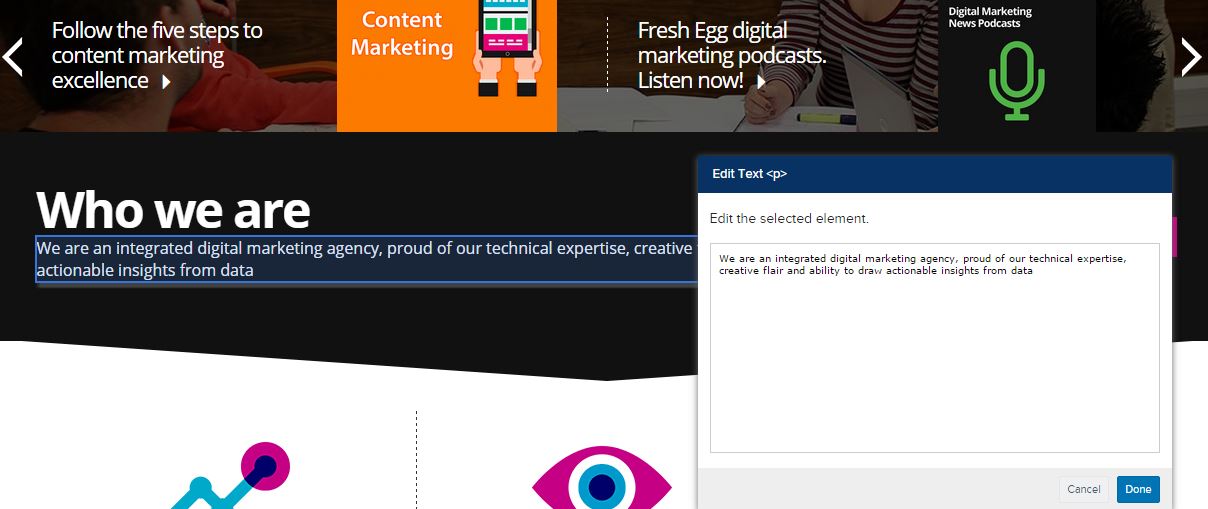

Modern split testing platforms make it easy for people to set up their own A/B tests. Optimizely’s WYSIWYG editor requires no development skills and supports a whole host of ways to modify an existing site.

Optimizely’s WYSIWYG editor makes it easy to set up simple tests

Regardless of how advanced testing platforms have become, there will always be times when something completely new is required and calls for advanced programming skills – a new widget perhaps, a product comparison tool, or integrations with a backend system. These are likely to be outside the typical abilities of a conversion optimiser and require input from developers.

Analytics integration

Reliable data is the key to devising a well-planned and successful testing program. To get this accurate data, experiments must be tightly integrated with other platforms.

Developers may be best placed to make sure that analytics tracking (such as Google Analytics tracking code) is installed correctly alongside split testing tools, and that custom goals and revenue tracking are accurately recording data from sites.
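One simple check a developer might run is to compare the conversion counts reported by the analytics package with those reported by the split testing tool for the same period, and flag any goal where the two disagree by more than an agreed margin. The sketch below assumes the counts have already been exported from each platform into plain dictionaries; the goal names, the numbers and the 5% tolerance are hypothetical.

```python
def tracking_discrepancy(analytics_count, testing_tool_count):
    """Difference between two conversion counts as a fraction of the larger count."""
    larger = max(analytics_count, testing_tool_count)
    if larger == 0:
        return 0.0
    return abs(analytics_count - testing_tool_count) / larger


def goals_out_of_tolerance(analytics, testing_tool, tolerance=0.05):
    """Return {goal: discrepancy} for goals where the two sources disagree
    by more than the tolerance. A goal missing from one source counts as zero."""
    flagged = {}
    for goal in sorted(set(analytics) | set(testing_tool)):
        diff = tracking_discrepancy(analytics.get(goal, 0), testing_tool.get(goal, 0))
        if diff > tolerance:
            flagged[goal] = diff
    return flagged


# Hypothetical exported counts for the same date range.
analytics_goals = {"purchase": 412, "newsletter_signup": 130, "brochure_download": 58}
testing_tool_goals = {"purchase": 405, "newsletter_signup": 96, "brochure_download": 58}

for goal, diff in goals_out_of_tolerance(analytics_goals, testing_tool_goals).items():
    print(f"Check tracking for '{goal}': counts differ by {diff:.0%}")
```

Small differences between platforms are normal, so the tolerance is a judgement call; a goal that is flagged is a prompt to inspect the tracking code, not proof that it is broken.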

How CRO can complement web development

Establishing what doesn’t convert

Finding out which elements on a page aren’t contributing to conversions and are causing unnecessary clutter is an important task. Too much clutter can generate noise around important CTAs, and poor navigation is a sure way to have users going round in circles rather than down the conversion path.

Removing unnecessary elements from a site also simplifies the code base. As a result, maintaining the site becomes easier and future changes are less complicated.

CRO prevents developers focusing their efforts on improvements that will provide little benefit to the bottom line and allows them to concentrate on what really matters.

Early testing to save time and money

Wireframes, user testing and stakeholder surveys can all find problems with a design early on. As any experienced project manager will tell you, the later in a project you discover plans need changing, the more it costs.

CRO at the ‘discovery’ stage of a project can tell you if your project is heading in the right direction, saving wasted time in developing features that are not required.

What are the challenges?

CRO can complement design and development work to get great results. While the disciplines can work well together, the section below gives some areas to look out for to ensure that they combine rather than conflict.

Changes during testing

Data integrity is paramount in split testing and any changes to a page that your experiments are running on can invalidate the results. Worse, the changes may be inconsistent with the variation code and could make it impossible for your visitors to convert!

Changes should be carefully planned, with both CRO and development teams being aware of what the other is doing. It may be advisable to pause an experiment while updates are being implemented, just in case a problem is discovered. That way, the development team can quickly roll back the change and the experimental data collected before the pause will remain valid.
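One way to make this coordination routine is to compare the pages touched by a planned release with the pages that running experiments target. The sketch below is a minimal illustration of that check; the experiment names, page paths and function name are hypothetical, and pausing the experiments themselves would still be done in whichever testing platform is in use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    name: str
    page_paths: frozenset  # site paths the experiment's variation code runs on


def experiments_to_pause(running, changed_paths):
    """Return the names of running experiments that target any page in the release."""
    changed = set(changed_paths)
    return [exp.name for exp in running if exp.page_paths & changed]


# Hypothetical running experiments and a planned release.
running_experiments = [
    Experiment("Checkout CTA colour", frozenset({"/basket", "/checkout"})),
    Experiment("Homepage hero copy", frozenset({"/"})),
    Experiment("Product page reviews", frozenset({"/product"})),
]
release_paths = ["/checkout", "/product", "/about"]

for name in experiments_to_pause(running_experiments, release_paths):
    print(f"Pause before release: {name}")
```

Matching on exact paths keeps the example short; a real site would probably need to match on URL patterns or templates, since one template change can affect many pages.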

Great designs that don’t perform

Another difficulty arises when a well-designed and visually stunning site doesn’t convert. This can be hard both for those who were involved in creating the design and for key stakeholders who have made an investment and are expecting a good return.

If you are brave enough to test your designs, you will be rewarded in the long run. The cause of a poor result could be something quite small, such as a misplaced CTA on a key page. Once you have learned why the new designs didn’t work, the problem can be fixed and the results should improve.

Testing alpha releases

One of the benefits of CRO mentioned earlier was that testing early on can cut down on making costly changes later. The downside to this is that the testing may reveal bugs rather than usability issues.

While it is good to find bugs in alpha releases, these should be uncovered during functional testing, not split testing. If bugs are causing issues during split testing, the results will be invalid.

Split testing has to be performed to scientific standards, so it should only be carried out once other forms of testing, such as functional testing, have ironed out any initial design problems.

Introducing CRO into well-established development processes

Change requires effort, and people build trust in well-established and reliable processes. If the benefits of CRO aren’t understood, it can be hard to convince anyone to add time and cost to a project. The reasons behind introducing CRO into the design and development process need to be made clear early on to minimise any resistance from stakeholders.

Change control

CRO teams need to understand and fit in with existing processes, and feed any successful variations into the development change control processes. They should also share the proposed variations before setting them live and allow internal teams to see them in a preview mode. Clear communication here is key to ensuring that CRO, design, and development teams work well together to achieve the best results.

Summary

The design and development of a website play a big part in its ability to convert users into customers, while the number of conversions is regularly used as a measure of success for the design of a new site. This means that optimisers, designers, and developers will often share the same objectives and will be working towards the same goal. These disciplines should complement each other to create a highly optimised site, ensuring that everyone sees the benefits of working together.

From a CRO perspective, input from creative designers and brilliant developers will help to create better A/B tests, leading to great results.
