Webmaster Tools: Get URLs Indexed Faster with Google’s Fetch as Googlebot

By Intern | 4 Aug 2011

Made important website design changes? Fixed an SEO problem? Redirected a crucial page? Running tests and need a quicker turnaround? Optimising for a time-limited event or key term? Published something you wish you hadn’t? Fetch as Googlebot just made your life a lot easier.

Google has announced a new feature in Webmaster Tools that allows webmasters to submit new or updated URLs for faster indexing. Fetch as Googlebot is not a particularly catchy title, but it is a long-overdue feature that takes some of the mystery and guesswork out of getting content indexed fast.

If you’ve been getting pages indexed quickly by tweeting, re-tweeting and social bookmarking, don’t stop yet: these social media activities benefit your search engine optimisation in more ways than just persuading Googlebot to come and visit. But do log in to Webmaster Tools and check out Fetch as Googlebot as soon as you can.

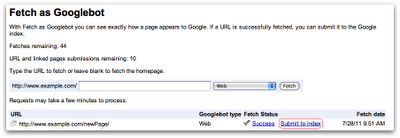

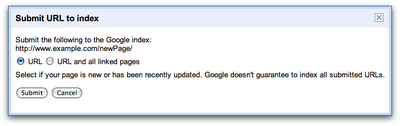

After you ‘fetch a URL as Googlebot’, you are given the option to submit that URL to the Google index and, in a rare bit of Google transparency, they even state that URLs should be crawled within a day.

The service comes with the usual caveats and is not a guarantee of indexing, with Google stating that submitted URLs will still be evaluated in the usual way.

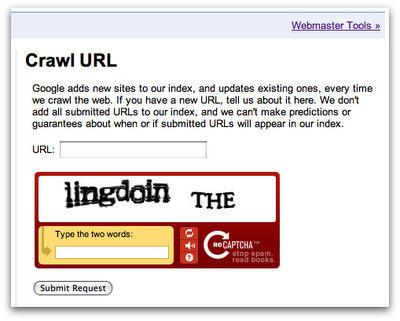

To avoid a deluge of indexing requests, Google has set a limit of 50 submissions per week. However, there is an option to submit a URL and all its linked pages at once, which is limited to 10 submissions per month. There is also a public version of Fetch as Googlebot offering URL submission without verifying ownership. What used to be known as the ‘add your URL to Google’ form is now the Crawl URL form, which has the same quota limitations as the webmaster’s version.
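If you plan to submit a lot of pages, it can help to keep your own tally against those limits. The snippet below is only a rough sketch: `SubmissionLog` is a hypothetical local helper, not part of any Google tool or API, and it assumes the quotas work as a rolling 7-day week and a rolling 30-day month, which Google has not spelled out.

```python
from datetime import date, timedelta

# Limits quoted in the article. Everything else here is a hypothetical
# local helper and does not talk to Google in any way.
SINGLE_URL_LIMIT = 50     # single-URL submissions per week
LINKED_PAGES_LIMIT = 10   # "URL and all linked pages" submissions per month


class SubmissionLog:
    """Records submission dates so you can see how much quota is left."""

    def __init__(self):
        self.single = []   # dates of single-URL submissions
        self.linked = []   # dates of URL-plus-linked-pages submissions

    def record(self, when, linked_pages=False):
        """Note one submission made on the date `when`."""
        (self.linked if linked_pages else self.single).append(when)

    def remaining(self, today):
        """Return (single-URL quota left, linked-pages quota left)."""
        # Assumption: a rolling 7-day week and a rolling 30-day month.
        week_ago = today - timedelta(days=7)
        month_ago = today - timedelta(days=30)
        used_single = sum(1 for d in self.single if week_ago < d <= today)
        used_linked = sum(1 for d in self.linked if month_ago < d <= today)
        return (SINGLE_URL_LIMIT - used_single,
                LINKED_PAGES_LIMIT - used_linked)


log = SubmissionLog()
log.record(date(2011, 8, 1))                      # one single-URL submission
log.record(date(2011, 8, 3), linked_pages=True)   # one linked-pages submission
print(log.remaining(date(2011, 8, 4)))            # prints (49, 9)
```

If Google turns out to count calendar weeks and months instead, only the two cut-off dates in `remaining` would need to change.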

It’s worth noting that Fetch as Googlebot is aimed mainly at standard, text-based web content; if you want to get your video or image files indexed fast, Google sitemaps should still be your first port of call.